Productboard Spark: AI built for PMs. Now available & free to try in public beta.

Learn moreProductboard Spark: AI built for PMs. Now available & free to try in public beta.

Learn moreProductboard Spark: AI built for PMs. Now available & free to try in public beta.

Learn moreProductboard Spark: AI built for PMs. Now available & free to try in public beta.

Learn more

Remember when you, as a data scientist, worked on an exciting idea or project, conducted exhaustive data exploration and cleaning, tested super interesting modeling techniques, and then hoped to somehow make it to production? Most of us know how this story continues. Despite the fact that companies are very excited to introduce Machine Learning capabilities, there are still many business adoption pain points and technical challenges along the way.

Getting ML into production is hard. Who can help you ship it? Who would own, monitor and maintain the models? Would you just hand over your code to another team and your job would be done? Or the line ends at Jupyter Notebook? Nowadays, working on an ML problem is important, but it’s just one piece of the puzzle in a successful machine-learning project journey.

At Productboard, we always considered Machine Learning as a complex end-to-end mission. In other words, data scientists should be able to translate business problems to data problems, research different types of solutions as well as provide decent code and make sure a solution is behaving as designed.

To this end, we started exploring MLOps and experimenting with ML as engineers. This includes packaging production-ready code, setting up CICD pipelines, deployment and monitoring production services. No worries, product implementation is still a task for our software engineers 🙂

The beginning of our MLOps journey began after we finished researching our pilot ML project. We ended up with standard ML workflows that pull, clean and retrain models for thousands of customers every day. So we started looking for a place under the sun Kubernetes where our ML pipelines can happily prosper.

Our requirements for such a framework were:

After undertaking quick ML landscape research, we ended up with the following shortlist (as of the beginning of 2021):

After hands-on testing of Airflow and Kubeflow, we had no major objections to choosing either of these tools. Kubeflow offers more than just tasks orchestration, but we felt Airflow had a steeper learning curve with a focus on general workflows rather than specializing on ML.

After more than one year of using Airflow, we are enjoying the ride despite a few hiccups that were mostly due to our inexperience. We are using a user community helm chart for easy out-of-the-box Kubernetes deployment. Recently, the official helm chart and Airflow 2 version were finally released.

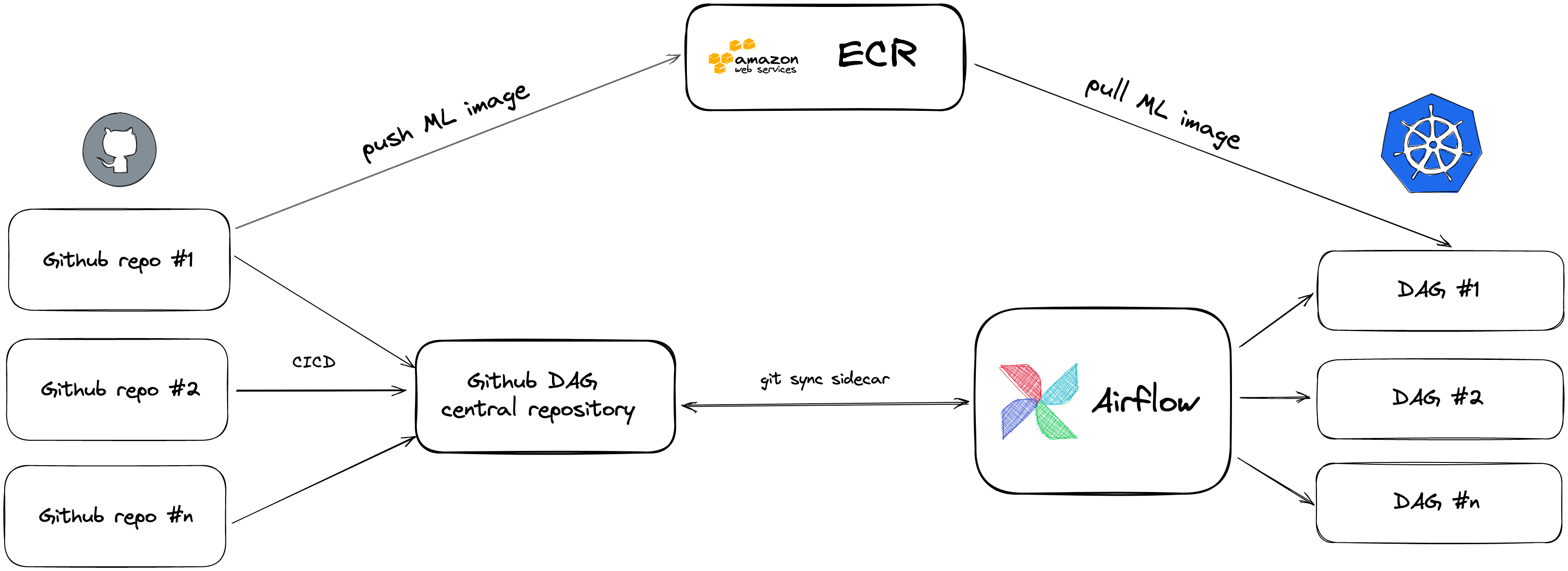

Our setup is rather basic. We have multiple ML code repositories and push DAGs to one central repository using CICD pipelines. Our production code is packaged in Dockerfiles, built and pushed to the central image repository. Airflow is syncing the DAGs from the central repository and images with the ML code from ECR and runs the tasks based on the DAGs definition. This gives us endless opportunities to run any code that could be packaged in Docker.

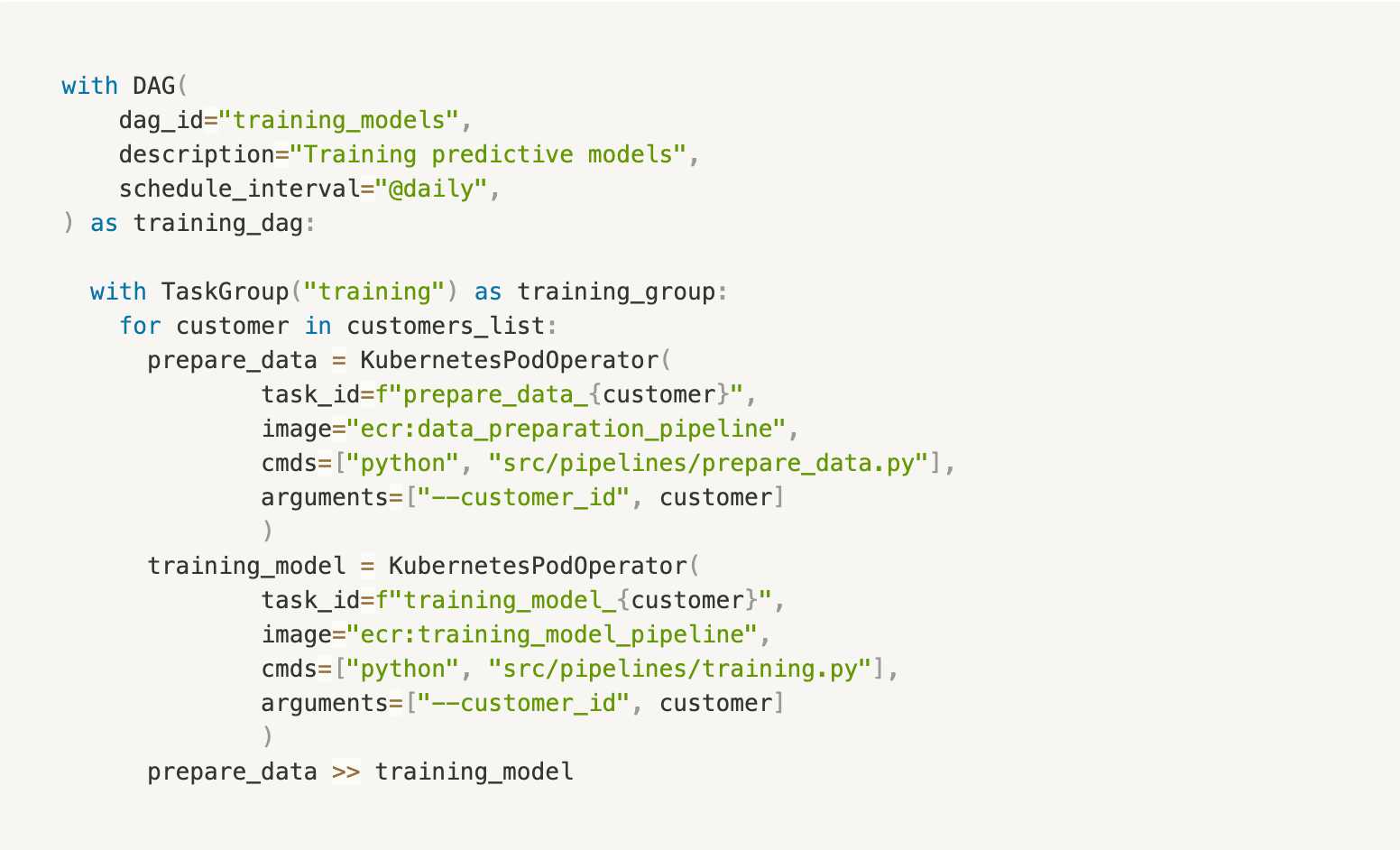

In DAGs, we use KubernetesPodOperator along with TaskGroup to dynamically create as many tasks as needed to segregate customer data in their own Kubernetes Pod.

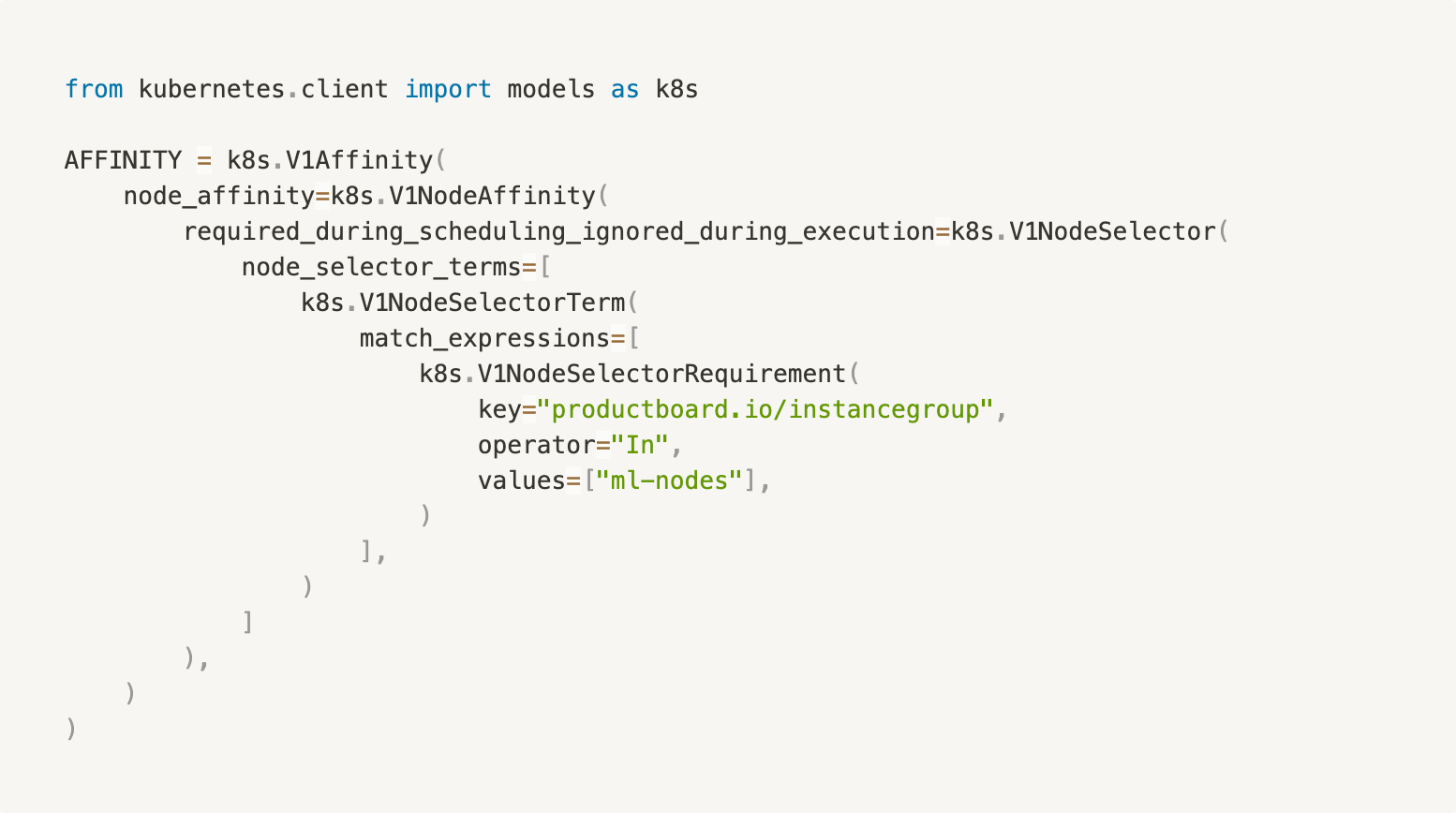

Airflow is a Kubernetes-friendly project, so we can use constraints such as affinities, tolerations, annotations, concurrency, and resource management for each task to make sure we have our workloads in control. One example could be defining the node where the tasks should run:

Currently, we run around 5,000 tasks per day, using nodes with c5.2xlarge instances. Our minimum node size is zero, while the maximum is 20. If there are currently no tasks running, we don’t waste any money. If there is a high-peak of queued tasks, autoscaling is triggered, and Airflow tasks take as many resources as needed. We also use a research nodes group for tasks that are unpredictable so they don’t draw resources from production workflows.

Even though one might question why a data scientist would deploy and maintain such a system, we found ourselves enjoying this journey. It gives us a lot of independence and ownership over our ML workflows and brings us closer to production. We also feel very positive about how other companies are using Airflow, which gives us more confidence to tackle more complex ML systems in the future.

—

Do you want to help our Machine Learning team manage current growth? Join Productboard as a Machine learning Team lead or Machine Learning Engineer.🚀

Interested in joining our growing team in a different role? Well, we’re hiring across the board! Check out our careers page for the latest vacancies.