7 lessons learned from 5 years of product-led experimentation

How do you run product-led growth experiments in your company? Do you have a clearly defined system? Or do you use trial and error with little to no structure and hope the most promising ideas stick?

Having a clear-cut process for running experiments is critical for unlocking the full growth potential of your product and getting the most out of your team’s efforts. However, multiple decision-makers, conflicting priorities, lack of data, and uncertainty can make it challenging to determine which product-led growth (PLG) experiments to run, and how.

In this post, I’ll share with you the hard lessons I’ve learned from my product-led experimentation journey. In the last five years, I’ve helped giants like Avast scale their growth programs to over $100 million in recurring revenue. Currently, I am responsible for running product-driven growth for productboard.

Let’s dive right into the lessons!

1. Don’t throw spaghetti at the wall

We all know that the trial and error method for product experimentation is a waste of time and money. This unstructured approach to growth can have severe consequences on your team’s morale, not to mention the overall perception of the team’s value within your company. You risk demotivating and frustrating your engineers and designers, especially if they are new to product growth. At the same time, your whole company will lose trust in the growth team and challenge its purpose. Even more discouraging, your stakeholders will question your ability to make a positive impact on the business.

So, what should you do instead of throwing spaghetti at the wall and hoping it sticks?

You should holistically define the areas that have the highest potential for making an impact on growth. One way to do this is to organize your ideas into growth themes.

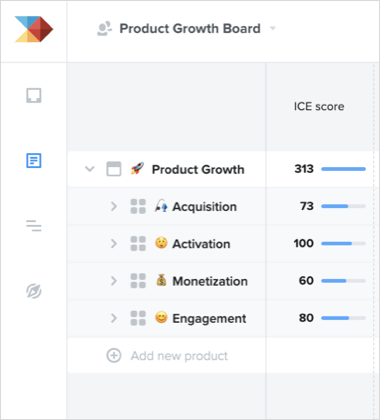

At productboard, we use our own product to run our experimentation program. Within our product hierarchy board, we organize our experiments into the following growth themes:

- Acquisition — experiments related to driving user acquisition such as signup flows, acquisition landing pages, gated content, acquisition models, and so on.

- Activation — experiments that can help users reach an “aha” moment and start forming a habit with your product.

- Monetization — experiments that increase the value captured by your product, such as pricing and packaging, paywalls, upsell paths, and so on.

- Engagement — experiments related to increasing product usage frequency, as well as experiments to keep your customers retained and successful.

After identifying your growth themes, the next step is to figure out which theme holds the biggest growth opportunities by running data-driven growth analysis, looking into under-optimized areas, and building growth models for individual ideas.

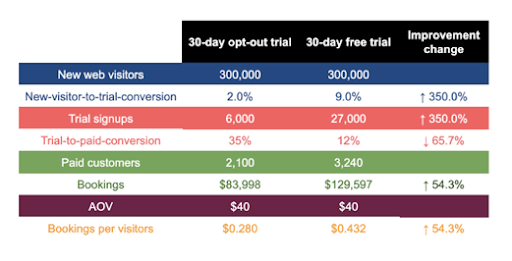

Growth models can help quantitatively separate the input of your growth ideas from the output. For example, what would happen if we experiment with removing billing requirements from the trial signup process (free trial vs. opt-out signup)? By feeding the model with external benchmark data from products in similar industries, I see that I can potentially generate a 50% uplift in my bookings per visitor.

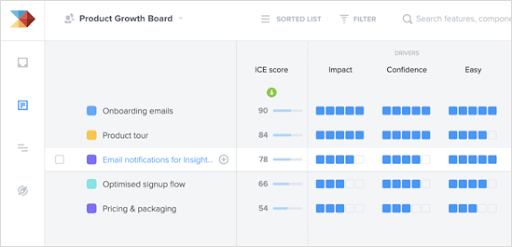
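
To make the idea concrete, here is a minimal sketch of such a model in Python. It assumes bookings per visitor can be approximated as signup rate multiplied by trial-to-paid conversion rate. The function name and every rate below are made-up placeholders chosen so the arithmetic is easy to follow; they are not productboard data or real benchmarks.

```python
def bookings_per_visitor(signup_rate: float, trial_to_paid_rate: float) -> float:
    """Share of visitors who become paying customers (a simplified two-step funnel)."""
    return signup_rate * trial_to_paid_rate


# Hypothetical inputs, for illustration only.
# Control: billing details required at signup (opt-out trial).
control = bookings_per_visitor(signup_rate=0.02, trial_to_paid_rate=0.50)
# Variant: no billing details at signup (free trial).
# Assumption: more visitors sign up, but fewer trials convert to paid.
variant = bookings_per_visitor(signup_rate=0.10, trial_to_paid_rate=0.15)

relative_uplift = variant / control - 1

print(f"Control: {control:.4f} bookings per visitor")
print(f"Variant: {variant:.4f} bookings per visitor")
print(f"Modeled relative uplift: {relative_uplift:.0%}")
```

Keeping the inputs (the two rates) separate from the output (bookings per visitor) lets you swap in your own benchmark data and see how sensitive the result is to each assumption.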

Once you model out the impact of your most promising ideas, you can plug them into the ICE (Impact, Confidence, Ease) framework and prioritize which experiments to start with.

At productboard, we use a version of the ICE framework, expressed as a custom prioritization score, to help us decide which experiments to start with.
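
For reference, a plain ICE score can be sketched as follows. This is one common variant that multiplies three ratings on a 1 to 10 scale; it is not productboard’s custom score, and the experiment names and ratings are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Experiment:
    name: str
    impact: int      # expected effect on the growth metric, rated 1-10
    confidence: int  # strength of the supporting evidence, rated 1-10
    ease: int        # how cheap it is to build and ship, rated 1-10

    @property
    def ice_score(self) -> int:
        # Assumption: multiply the three ratings. Some teams average them instead.
        return self.impact * self.confidence * self.ease


# Hypothetical backlog, for illustration only.
backlog = [
    Experiment("Remove billing step from trial signup", impact=8, confidence=6, ease=4),
    Experiment("Personalize onboarding from signup survey", impact=6, confidence=7, ease=5),
    Experiment("Shorten the signup form", impact=3, confidence=8, ease=9),
]

# Highest score first.
ranked = sorted(backlog, key=lambda e: e.ice_score, reverse=True)

for experiment in ranked:
    print(f"{experiment.ice_score:>4}  {experiment.name}")
```

Note how a multiplicative score rewards ideas that are easy to ship. If that bias does not suit your team, weight the impact rating more heavily.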

2. Don’t blindly copy best practices or someone else’s successful experiments

User motivation and ability vary from product to product and persona to persona. Different audiences have different needs, problems, and jobs they hire your product to do. Tactics that work for a friend in growth may not work for you. You must separate universal best practices from the ideas that simply do not apply to your case.

Fortunately, there’s a more reliable way to approach PLG experiments. First and foremost, you must understand your users’ ability and motivation, as well as the jobs they are trying to get done with your product. What are their pain points? How easy is it for them to use your product to solve them?

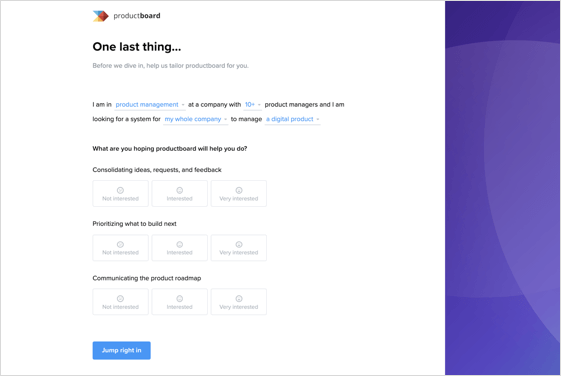

At productboard, we ask questions in a signup survey. This helps us segment our users and personalize their onboarding experience.

We also run deeper user research using user testing and session recording tools like Fullstory to gauge whether our users can accomplish their desired outcome during onboarding. Specifically, we look for usability issues and points of confusion.

In addition to surveys and customer interviews, we also use productboard to consolidate all ongoing feedback from colleagues and users as inputs to help us ideate and prioritize product growth experiments under different themes.

3. Don’t fall in love with your ideas. Put them into a hypothesis statement.

You think you have a brilliant experiment idea, something that no one else would ever think of. You have your heart set on testing it. You move it to the top of your backlog. You test it, and boom, it’s a slap in the face. Your experiment failed.

Ideas always sound better than they really are. We steer away from validating them and ignore risks to avoid getting our ego bruised.

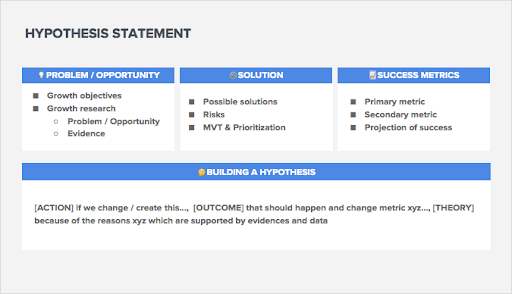

To emotionally disconnect from your ideas, build a hypothesis statement. This is the first step in exposing your brilliant ideas to the real world.

Step 1: Define growth objectives and carry out research

When building a hypothesis statement, you should start with a growth objective that guides the direction of your research.

Your research can reveal problems or untapped opportunities that limit your growth. It is important to collect multiple pieces of qualitative and quantitative evidence that validate the problems you identify.

Step 2: Propose solutions and identify risks

Once you know the problem well, you move to the solution stage.

At this step, you list all of the possible solutions for the problem along with a list of risks that could potentially prevent your solution from delivering the desired outcome.

Step 3: Set success metrics

The success of the solution you choose will be measured by a primary metric you are trying to move. This metric should be tied to your objective.

Secondary metrics are supportive metrics that can help you draw quicker learnings about your experiment.

Step 4: Formulate your hypothesis statement

Once you get your problem, solution, and success metrics right, it is time to start building your hypothesis statement.

The ACTION of your hypothesis is driven by the solution you choose.

The OUTCOME is the causal effect of your solution and how it contributes to your objective.

The THEORY explains why your hypothesis is worth testing.

Few companies have developed the habit of building hypotheses from scratch. We tend to jump straight to a solution before we understand the problem it solves or its alignment with global growth objectives.

To ensure you have a solid idea, you have the responsibility to reverse engineer your ideas into problems and objectives, as well as to collect research data that can support the hypothesis.

Hypothesis statement formulation is just the start. The next step is to know how you should deal with weak experiments.

4. Don’t wait too long to call your experiments when your traffic is low

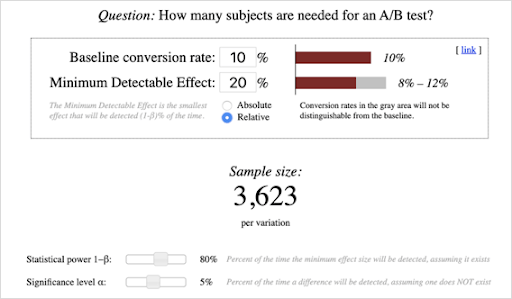

When working for Avast, a B2C antivirus software company, I had the luxury of having more than 400 million users to target with my PLG experiments. My experiments could reach statistical significance in just a few days (if not a few hours). Even a 5% uplift in conversion had an impressive impact on revenue, so it was worth the time!

Unfortunately, in B2B, if you don’t have a freemium acquisition model, your user activity volume is likely to be on the lower end. This means you’ll need to wait several weeks or months to detect even double-digit uplifts. In that case, waiting for statistical significance on an experiment that could yield only a minor improvement is overkill.

This raises the question: Should you run experiments if your traffic is low?

In B2B products with a trial-driven onboarding process and fewer than 50K visitors per month, calculate your sample size for a relative improvement of at least 20%. Detecting smaller effects takes far more traffic.
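
As a rough illustration of why the threshold matters, the sketch below estimates the sample size per variant for a two-sided test of two conversion rates, using the standard normal-approximation formula. The 10% baseline conversion rate, the 5% significance level, and the 80% power are assumptions for the example; plug in your own numbers.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_per_variant(
    baseline_rate: float,
    relative_lift: float,
    alpha: float = 0.05,  # assumed two-sided significance level
    power: float = 0.80,  # assumed statistical power
) -> int:
    """Visitors needed in EACH variant to detect the given relative lift."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    p_bar = (p1 + p2) / 2

    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)

    numerator = (
        z_alpha * sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return ceil(numerator / (p2 - p1) ** 2)


# Hypothetical baseline: 10% of visitors start a trial.
for lift in (0.05, 0.10, 0.20):
    n = sample_size_per_variant(baseline_rate=0.10, relative_lift=lift)
    print(f"{lift:.0%} relative lift: {n:,} visitors per variant")
```

Because the required sample grows roughly with the inverse square of the effect size, halving the lift you want to detect roughly quadruples the traffic you need.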

If you reach the calculated sample size and the observed improvement falls short of that threshold, use Bayesian statistics to calculate the “probability to be best” and call the test sooner so you can move on to an experiment with higher potential.
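
One simple way to estimate “probability to be best” is a Beta-Binomial model with Monte Carlo sampling, sketched below. It assumes a uniform Beta(1, 1) prior on each variant’s conversion rate; the function name and the visitor and conversion counts are hypothetical, and commercial testing tools may compute this figure differently.

```python
import random


def probability_to_be_best(arms, draws=20_000, seed=0):
    """Estimate how often each arm has the highest conversion rate.

    arms maps an arm name to a (conversions, visitors) tuple.
    """
    rng = random.Random(seed)  # fixed seed so the estimate is repeatable
    wins = {name: 0 for name in arms}

    for _ in range(draws):
        # Draw one plausible conversion rate per arm from its Beta posterior.
        sampled = {
            name: rng.betavariate(1 + conversions, 1 + visitors - conversions)
            for name, (conversions, visitors) in arms.items()
        }
        wins[max(sampled, key=sampled.get)] += 1

    return {name: count / draws for name, count in wins.items()}


# Hypothetical low-traffic test results.
results = probability_to_be_best({
    "control": (48, 1_000),
    "variant": (61, 1_000),
})
for name, probability in results.items():
    print(f"{name}: {probability:.1%} probability to be best")
```

Agree on a decision threshold before the test starts (for example, ship the variant if its probability to be best exceeds a level your team is comfortable with), so the call does not depend on when you happen to look at the data.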

In low-volume B2B startups, don’t waste time experimenting with high certainty optimizations supported by research. Just take the risk of rolling them out and observe the data on a time series basis. There is no time to be extra careful.

5. Prioritize outcomes over incremental learning

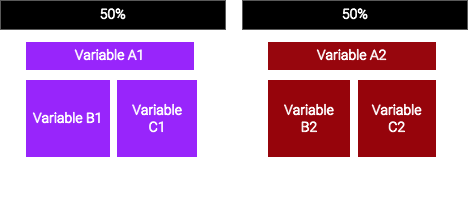

A common practice in product-led experimentation is to run A/B tests on incremental changes.

Let’s say I want to improve my onboarding tour copy AND layout. I’d test both components separately so I can learn which variable is going to help increase the onboarding completion rate. While this methodology is sound, experimenting with isolated variables usually has a long testing cycle, especially if you have limited user activity.

Optimizing purely for learning can delay the outcomes.

To avoid this, make bigger bets by combining multiple changes that have a high likelihood of creating a positive impact when united into a single experiment.

Combined variables can have interaction effects that boost your desired outcome. For example, my new copy and new layout together could have an interaction effect that drives better overall user activation.

That said, some variables take a lot of effort to implement. For those, learning about their impact through incremental iterations is still valuable before you commit to the full build.

6. Sometimes friction is good

Most conversion rate optimization experts frame friction as an evil to be removed so the user journey is as smooth as possible (and thus converts more users). If I reduce the number of form fields, my conversion rate will increase. If I reduce the number of steps, users won’t drop off.

In many cases, removing friction can really help. So why is it not always a good idea?

Because the quality of conversions is almost always more important than quantity.

If you have a gated content piece — a free resource, eBook or report — adding a bit of friction by asking for relevant information can increase the quality of your leads. If you have a B2B SaaS targeting mid-market and enterprise accounts, requiring users to input their company email might also help filter your target customers.

Adding friction by asking users to install a snippet, connect with a third-party app, or give permission can improve the onboarding process and benefit activation. Additionally, asking users what they want to accomplish with your product can serve as data input for onboarding segmentation.

Lastly, you can use friction to increase a user’s investment in your product, making them stick around longer.

7. Get everyone in your organization involved with product-led growth experiments

Product-led growth is not only the job of product managers; it’s the job of multiple functions within an organization. Sales, marketing, customer success, and customer support are crucial for gathering insights on your customers, a vital part of a holistic growth experimentation process. Ultimately, their combined knowledge will help you craft PLG experiments that create real value for your customers.

It’s your responsibility to get diverse stakeholders on board, align them on your growth experiments, and share results and learnings as you go.

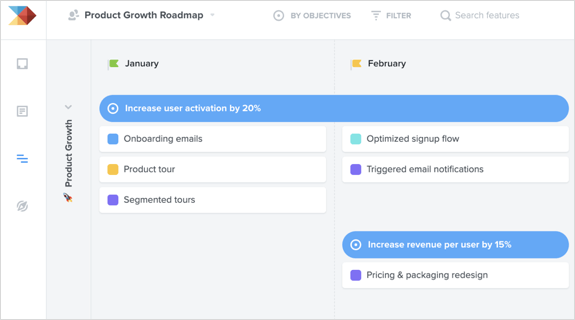

The roadmap board in productboard can help you communicate your growth experiments visually. Documenting your roadmap and making it accessible to your company will ensure that everybody is on the same page and make it easier to get buy-in from all departments. You can create multiple views of your roadmap to cater to different stakeholders, and all will automatically remain up to date.

. . .

Running product-led growth experiments is an active and continuous process that requires multiple iterations and a careful understanding of your customers. Fortunately, through the right combination of a growth mindset, data-driven decisions, and documented systems, you can drive product growth and make your users successful.

I hope these seven lessons inspire you to start building and running solid product-led growth experiments that yield rich results!
